Week 7 means winter break for many, and that includes us at Viden.AI, although a few student assignments still need grading. Even so, a few news stories were too interesting for us to relax completely.

This is especially true of the story Version2 published last week about security flaws in ExamCookie, which showed that the system can easily be circumvented.

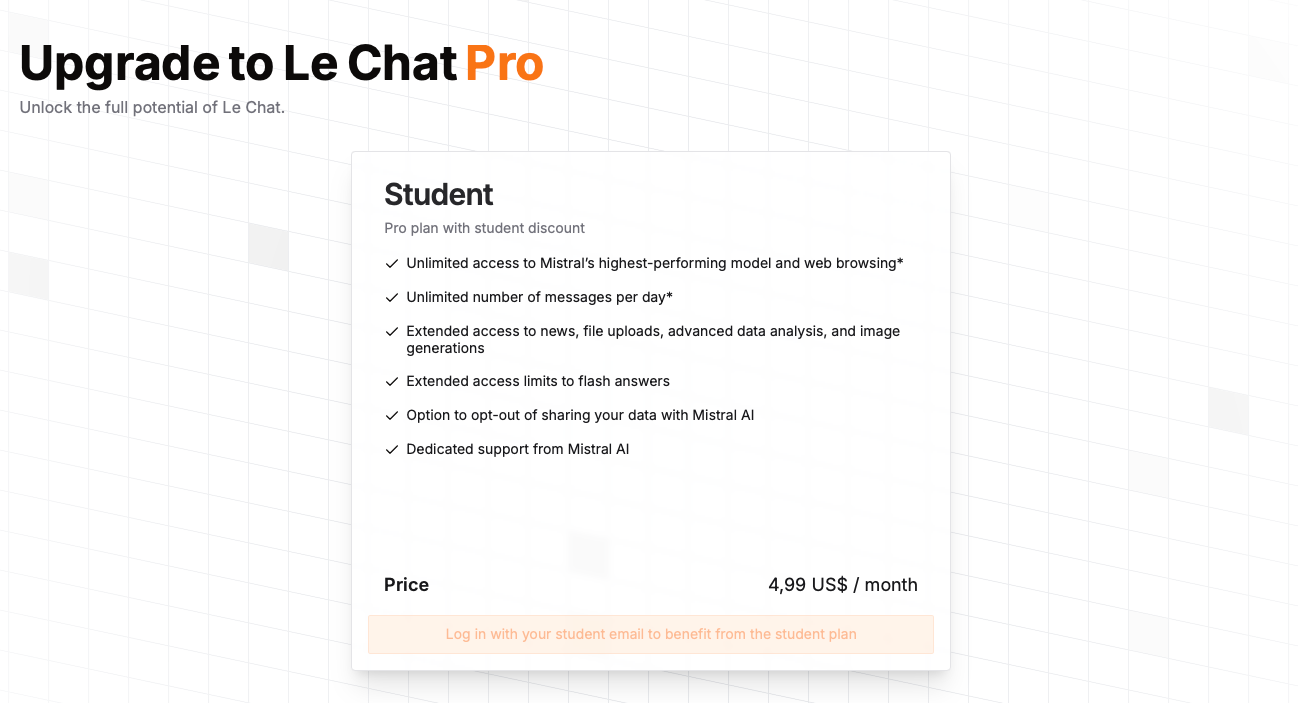

New language models keep being developed, and with them come new ways to use AI in teaching. It is particularly interesting that the French company Mistral AI has released an EDU version of Le Chat Pro, which students can use for about 35 DKK per month. Meanwhile, new initiatives are underway to develop strong open-source European language models.

AI use is often described as a significant environmental burden, and in its current form that is probably true. However, researchers from SDU are working on a method that could substantially reduce energy consumption. Specifically, they are testing a technique that reduces the number of bits per parameter in language models. It may sound technical, but at its core it is about switching from calculations with decimal (floating-point) numbers to calculations with whole numbers, a change that could make AI much more energy efficient.

At the University of Bergen, questions have been raised about whether an examiner used AI to grade students' exams and write feedback on their papers.

Sam Altman, CEO of OpenAI, appeared on the podcast Re:Thinking to discuss which skills humans will need in a future shaped by AI.

There are also a number of other news stories worth exploring.

Happy reading!

HTX students reveal security flaws in ExamCookie

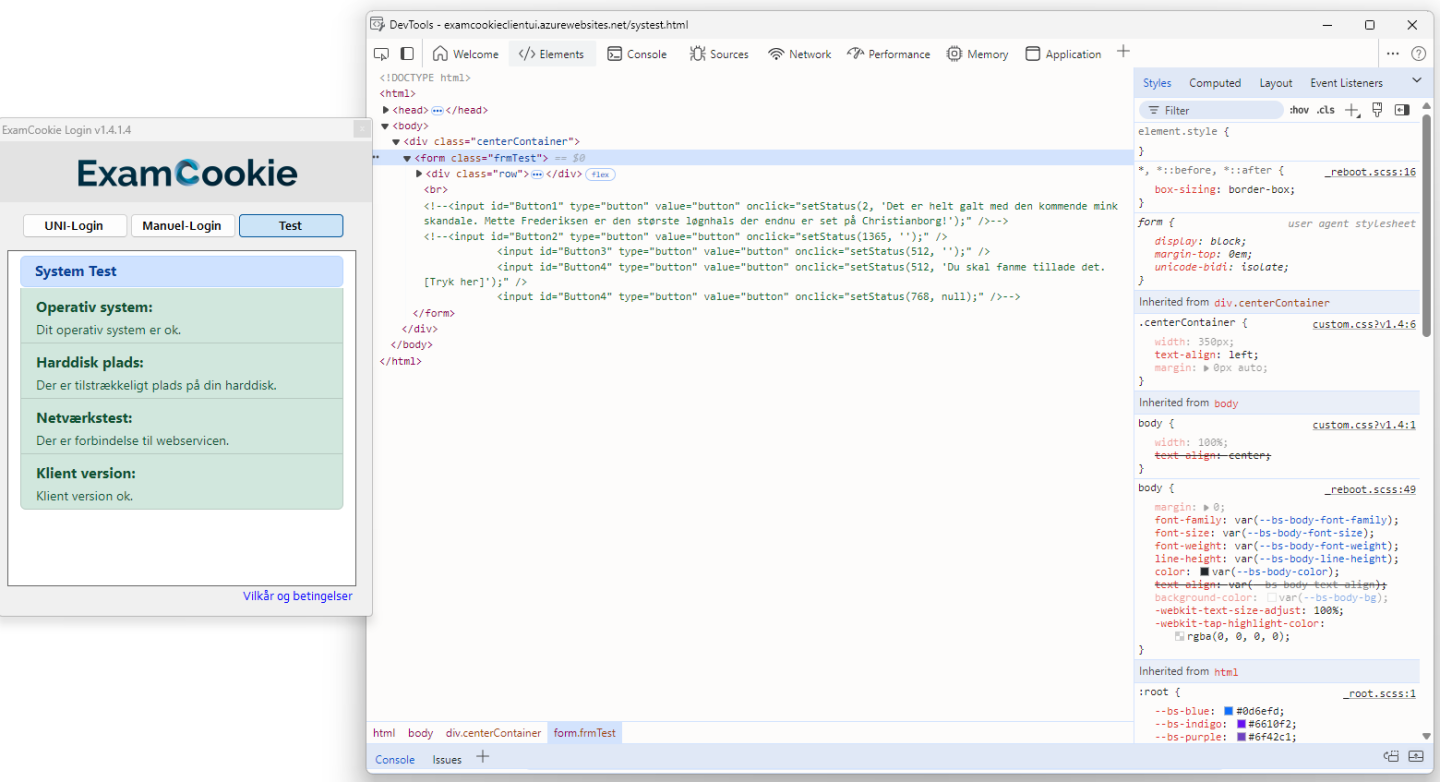

Version2 reports that two third-year HTX students have discovered that the code behind the exam program ExamCookie can easily be accessed and analyzed. They have published a guide on GitHub explaining how to bypass the program's monitoring features using DLL injection. They also show that simply renaming the web browser is enough to evade monitoring and use AI tools such as ChatGPT without being detected.

The students expose significant vulnerabilities in ExamCookie, including the ability to disable its screenshot and clipboard-logging features. Two IT security experts express concern, as this could make cheating during exams harder to detect.

Other students also found a remarkable comment in ExamCookie's HTML code: “Things are going completely wrong with the upcoming mink scandal. Mette Frederiksen is the biggest liar Christiansborg has seen yet!” According to a security expert, the comment points to low coding standards and an unprofessional product.

The director of ExamCookie was not aware of the comment, which has now been removed. He also dismisses the criticism, asserting that it is only theoretically possible to circumvent the program. Schools will be alerted if students attempt to cheat, he states. In response to the criticism, ExamCookie has released an updated version with enhanced security.

The case raises questions about whether technological solutions can effectively prevent cheating during exams, and whether there is a need for a more thorough revision of exam formats in light of AI developments.

Behind paywall

Mistral AI launches mobile app

The French company Mistral AI has launched its AI assistant Le Chat as a mobile app for iOS and Android. Until now, Mistral has primarily focused on businesses and professional users, but the company is now competing directly with OpenAI’s ChatGPT, Google Gemini, and the Chinese AI assistant DeepSeek.

For the education sector, Mistral offers an EDU license for about 35 DKK per month, giving students and educators a more open and tailored AI solution than the closed systems from American companies. The service can also be used for free, but free users accept that their data is used to improve the system.

European collaboration on open language models

A consortium of 20 leading European research institutions, companies, and EuroHPC centers has entered into a collaboration to develop a family of open, multilingual language models under the project OpenEuroLLM. The initiative aims to strengthen Europe’s digital sovereignty and competitiveness in AI by making advanced language models available to businesses, industry, and public organizations.

Researchers work to make AI searches more sustainable

The use of AI chatbots like ChatGPT requires significant amounts of energy and water, which has an environmental cost. A single chatbot query can consume many times more energy than a Google search, and with millions of people using chatbots daily, the total consumption is enormous.

To address this challenge, researchers from the Department of Mathematics and Computer Science at the University of Southern Denmark are working on making large language models more energy efficient. Lukas Galke and Peter Schneider-Kamp have been granted resources on the supercomputer LEONARDO to develop more sustainable models. They focus on reducing energy consumption during inference, that is, when users interact with the models, rather than during training.

The researchers hope to reduce the number of bits per parameter in language models, which could significantly cut energy consumption. If their method succeeds, a query could potentially become 30 times more energy efficient than it is today.
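To give a rough idea of what "fewer bits per parameter" means, here is a minimal sketch of generic symmetric quantization in Python. It is an illustration under our own assumptions, not the SDU researchers' method: the function names `quantize`, `dequantize` and `int_dot` are hypothetical, and each list of decimal weights is mapped to small whole numbers that share a single scale factor. With `bits=8`, every weight is stored as an integer between -127 and 127 instead of a 32-bit decimal number. With `bits=2`, only the values -1, 0 and 1 remain.

```python
def quantize(weights: list[float], bits: int = 8) -> tuple[list[int], float]:
    """Map decimal weights to signed integers sharing one scale factor (symmetric quantization)."""
    if bits < 2:
        raise ValueError("bits must be at least 2")
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits; 1 for 2 bits (values -1, 0, 1)
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round each weight to the nearest integer step and clamp to the allowed range
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes: list[int], scale: float) -> list[float]:
    """Approximately recover the original decimal weights."""
    return [c * scale for c in codes]


def int_dot(codes_a: list[int], scale_a: float, codes_b: list[int], scale_b: float) -> float:
    """Dot product computed with integer multiply-accumulate, rescaled once at the end."""
    acc = sum(a * b for a, b in zip(codes_a, codes_b))  # whole-number arithmetic only
    return acc * scale_a * scale_b


if __name__ == "__main__":
    # Hypothetical example values, chosen only for illustration
    weights = [0.5, -1.27, 0.03, 1.0]
    inputs = [1.0, 2.0, -0.5, 0.25]
    cw, sw = quantize(weights)
    cx, sx = quantize(inputs)
    exact = sum(w * x for w, x in zip(weights, inputs))
    print("8-bit codes:", cw)
    print("exact:", exact, "integer approximation:", int_dot(cw, sw, cx, sx))
    print("2-bit codes:", quantize(weights, bits=2)[0])
```

In `int_dot`, the dot product (a core operation inside a language model) is computed entirely with whole numbers. Only the final sum is multiplied by the two scale factors. Integer arithmetic on fewer bits generally costs less energy and memory bandwidth than floating-point arithmetic, which is the intuition behind this line of research. The price is a small rounding error, which the example makes visible by printing the exact result next to the integer approximation.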

University cancels grading after suspicion of errors

35 out of 71 students at the University of Bergen will have their exam in European history and politics re-graded. The decision follows complaints about the justifications given for the grades, which raised concerns within the university's administration.

Several students speculated that the examiner had used AI to assess the exam papers, but the university emphasizes that the complaints do not directly mention AI. However, after a series of spot checks, the university could not confirm that the grading had been carried out in a professionally responsible manner and therefore decided to cancel the assessments for the affected students.

Podcast: The ability to ask questions will become the most important skill in the age of AI

Sam Altman, CEO of OpenAI, predicts that AI will fundamentally reshape the economy and labor market. In a conversation with psychologist Adam Grant on the podcast Re:Thinking, Altman emphasizes that raw intelligence will no longer be the most important skill in the future job market. Instead, the ability to ask good questions and connect information in new ways will be crucial.

Altman explains that intelligence used to be measured by how much knowledge a person could remember. But in an age where AI can store and recall information far better than humans, it is more important to identify patterns and connections than to memorize facts. He compares this development to the rise of the internet, when teachers in his school days tried to ban the use of Google in class. Instead of making us dumber, it enabled us to solve more complex tasks.

Grant summarizes Altman's point by stating that it will become more important to be a “connector” of information than a “collector” of facts. Creativity and the ability to see connections across different fields of knowledge will be central competencies in the age of AI.

This week's other news

This article has been machine-translated into English. Therefore, there may be nuances or errors in the content. The Danish version is always up-to-date and accurate.