Welcome to a new week filled with exciting news about artificial intelligence and education! This time, we focus on young people's experiences with ChatGPT and the challenges of identifying AI-generated texts. We also take a closer look at the management of AI from a leadership perspective, as well as the potential consequences of Trump's policies for data transfers between the EU and the USA.

We also link to a number of relevant news articles from both domestic and international sources, and there’s room for more nerdy news about DeepSeek and Google's Titans.

Happy reading!

- A new study shows that young people in upper secondary education benefit from AI as a learning partner, while also struggling with the temptation to take shortcuts.

- A new article on Videnskab.dk explores the possibilities for identifying whether a text has been written by AI.

- The podcast Pilestrædet features Martin Exner in the studio discussing SkoleGPT.

- In a blog post, Mikkel Aslak criticizes the proposal to use analog exams as a solution to AI cheating.

- Jeppe Stricker investigates the challenges leaders face in working with generative AI.

- "AI-Frede" makes teaching more engaging at SOSU Nykøbing Falster.

This week's nerdy section:

- Actions by the Trump administration threaten TADPF, which could make it illegal to use American cloud services.

- Stanford researchers have developed AI agents that can simulate human behavior.

- The Chinese startup DeepSeek puts pressure on Meta with cheaper and more efficient AI models.

- Google's Titans brings AI closer to human cognition by combining short-term and long-term memory.

Young people balance opportunities and challenges with AI in education

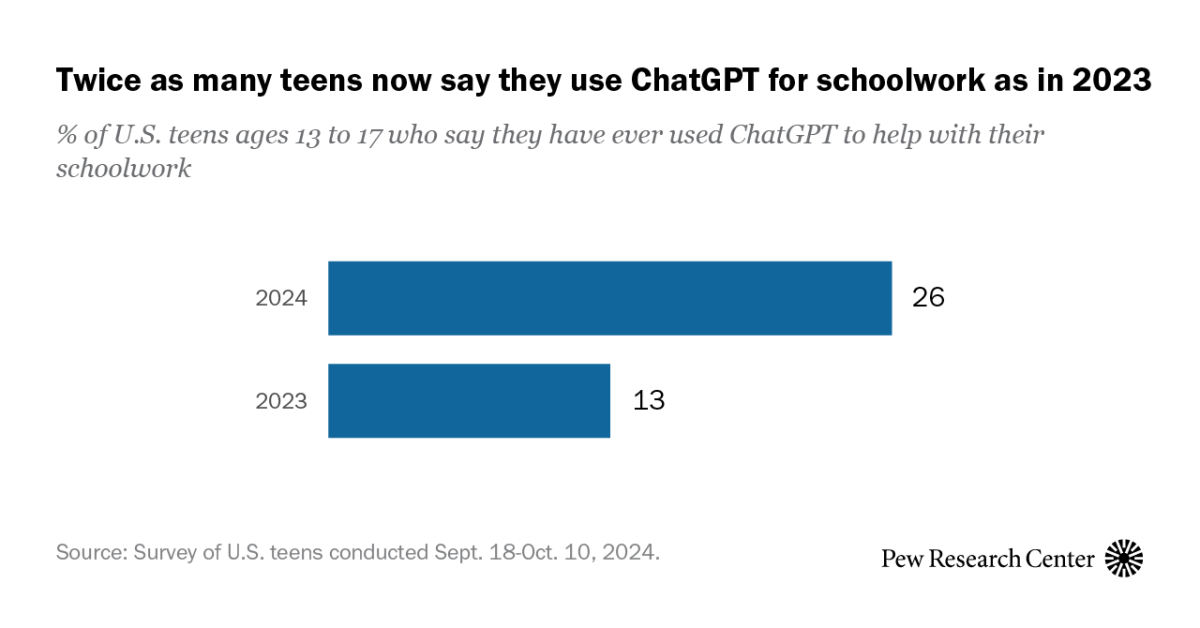

Students in upper secondary education are increasingly using ChatGPT for both daily tasks and schoolwork. Many see the technology as a valuable learning partner, particularly for assignments like the larger written SRP project, but also as a potential obstacle to deep learning and critical thinking. Studies show that easy access to AI can lead to cognitive offloading and less reflection, which raises concerns among experts.

A new report from Democracy X and the Data Ethics Council, in collaboration with TrygFonden, reveals that young people are very aware of both the benefits and pitfalls of AI. While many view AI as a time-saving aid, some feel that the technology can undermine their self-discipline and ability to learn. Students also report that educators lack a common approach to how AI should be used in teaching. One in five students reports that teachers have different rules for AI use, and many are calling for common guidelines.

Birgitte Vedersø argues that AI can be constructively used in teaching if assignments are designed to promote reflection and critical thinking. She also calls for a cultural reckoning with the current performance culture, which may lead to over-reliance on AI for quick results.

The study suggests that students are both open to and reflective about the potential of the technology, but need help using AI as a learning tool without losing their motivation and independence.

Download the report here:

Videnskab.dk: How do you discover if a text is written by AI?

Videnskab.dk has published an informative article about the challenges of identifying AI-generated texts at a time when such content is becoming increasingly widespread. According to the article, there are still no reliable detection methods.

Experts emphasize that methods such as “token-likelihood” analyses and watermarking can be useful, but are often impractical or easy to circumvent. The article also explains how malicious actors can leverage publicly available models and datasets to generate synthetic content in multiple languages at very low costs.
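For the nerdy reader, the idea behind a "token-likelihood" analysis can be sketched in a few lines. This is only an illustration under strong assumptions: real detectors score each token with a large language model, whereas the toy unigram model below is a stand-in, and all names (`train_unigram`, `mean_log_likelihood`) are hypothetical. The principle is the same: text that a model finds very predictable gets a higher average log-likelihood.

```python
import math
from collections import Counter


def train_unigram(corpus_tokens):
    """Count token frequencies in a reference corpus (toy stand-in for a language model)."""
    counts = Counter(corpus_tokens)
    return counts, sum(counts.values())


def mean_log_likelihood(tokens, counts, total):
    """Average log-probability per token, with add-one smoothing for unseen tokens."""
    vocab_size = len(counts) + 1  # +1 reserves probability mass for unknown tokens
    log_probs = [
        math.log((counts.get(tok, 0) + 1) / (total + vocab_size))
        for tok in tokens
    ]
    return sum(log_probs) / len(log_probs)


# Tiny made-up reference corpus, purely for illustration.
reference = "the cat sat on the mat and the dog sat on the rug".split()
counts, total = train_unigram(reference)

predictable = "the cat sat on the mat".split()
surprising = "quantum zebras juggle violet theorems".split()

score_predictable = mean_log_likelihood(predictable, counts, total)
score_surprising = mean_log_likelihood(surprising, counts, total)

# A higher (less negative) score means the model finds the text more predictable.
print(score_predictable > score_surprising)
```

A score like this is only a weak signal. Paraphrasing or light editing changes the token probabilities, which is one reason such methods are easy to circumvent.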

One of the main points of the article is that it is crucial to critically assess unknown information sources. It also recommends working on global solutions such as mandatory watermarking and centralized registries for tracking synthetic texts. The article further encourages broader discussions about responsible AI development and the importance of educating the population in source criticism and technology understanding.

Podcast: Chatbot, write my school assignment

In the podcast Pilestrædet, published by Berlingske, Martin Exner from the Center for Teaching Materials discusses the possibilities of using SkoleGPT in teaching. SkoleGPT differs from other AI tools, such as ChatGPT, by being data-secure, open-source, and free for schools to use. The purpose of SkoleGPT is to provide teachers and students with a tool that promotes learning and creativity while eliminating the risk of data breaches or illegal use.

In the podcast, Exner emphasizes that the technology can be used for tasks such as idea development and simulation, but stresses the importance of teaching critical use of AI. The podcast also addresses challenges such as the risk of cheating and the loss of students' independent thinking. However, Exner points out that schools should embrace AI as an integrated part of modern education.

Analog exams as a response to AI create challenges

In a blog post, Mikkel Aslak criticizes the proposal to introduce more analog exams as a solution to cheating with generative AI. He points out that such exams may promote rote learning and hinder students' critical thinking and digital literacy. Aslak argues that written and oral assessments should be combined, and that AI should be used as a tool in the writing process to better prepare students for the future.

Additionally, he highlights that the current exam system already faces challenges, including significant variations in grading. Instead, he calls for rethinking assessment formats by combining written products with dialogues between student, teacher, and examiner, although this may be both more costly and complex.

Jeppe Stricker writes about leadership and generative AI

On his blog, The Future of Higher Education, Jeppe Stricker has written an article that highlights the challenges and opportunities that leaders face when working with generative AI. The article emphasizes how the technology can revolutionize decision-making processes, improve efficiency, and free up resources. At the same time, it demands new skills and critical thinking to handle the accompanying ethical dilemmas and risks.

Stricker argues that leaders should gain a deep understanding of the potential and limitations of generative AI in order to implement the technology strategically and responsibly. The article stresses that AI is not just a tool, but also a game changer that necessitates adjustments to the organization's culture and leadership style.

AI as part of the teaching at SOSU Nykøbing Falster

SOSU Nykøbing Falster has taken a significant step towards integrating AI in education. As part of a three-year development project, AI has been introduced through interactive, animated cases as a supplement to traditional written assignments. One example is "Frede," an AI-generated character that makes teaching more engaging by sharing stories about his life and challenges directly with students. The initiative targets students who learn best through emotional interaction, but traditional cases are still available as an alternative.

In addition to the animated cases, AI is also used as a tool to provide feedback on students' assignments. Chatbots can assist students with academic feedback and improvement suggestions, which can enhance their work before submission. However, the focus is on ensuring that AI functions as a learning tool and not as a means to complete the assignments for students.

The project involves significant ethical considerations, and there is active work on topics like source criticism and GDPR to ensure responsible implementation of AI in education. The school's leadership sees great potential in the development – both in strengthening education and preparing students for a modern labor market where technology plays a central role.

Trump threatens EU-US data agreement

The new Trump administration has created uncertainty around the central EU-US data agreement, the Transatlantic Data Privacy Framework (TADPF). The agreement, which was adopted in July 2023, was supposed to ensure lawful data transfers between the EU and the US, but now risks being annulled.

TADPF was adopted to resolve issues with previous data agreements, which the Court of Justice of the European Union ruled illegal because of extensive US surveillance laws. However, TADPF relies on promises and declarations from the Biden administration, and President Trump has already announced a review that could lead to their withdrawal within 45 days. It is important to note that the agreement does not immediately become invalid, even if key elements are removed. It remains in force until the EU formally annuls it, which creates a temporary legal gray area.

Max Schrems, known for his lawsuits regarding data protection, warns that the agreement is “built on sand” and could easily collapse. If that happens, many businesses and public institutions in the EU could find themselves in an uncertain legal situation.

If the agreement falls, it may become illegal to use American cloud services like Google Workspace, Microsoft Teams, and Amazon Web Services. This would have significant consequences for Danish schools that currently use these services in daily teaching and administration. To avoid problems, it is recommended that schools and businesses consider using European providers or ensure that data is exclusively stored and processed within the EU.

Stanford researchers create AI agents based on the personalities of 1,052 people

Stanford researchers have developed AI agents that can simulate personalities with high accuracy based on interviews with 1,052 individuals. The agents answer questions and make decisions in line with their human counterparts. They can be used as a testing environment to explore societal issues like climate change and pandemic policies.

DeepSeek challenges Meta with cheaper and more effective AI models

The Chinese AI company DeepSeek has developed an open-source model, DeepSeek-R1, that surpasses leading AI models like OpenAI's in several areas. DeepSeek has achieved this despite limited resources and without access to the latest advanced chips due to US export restrictions. Instead, the company has focused on optimizing its software and introducing more efficient methods for training AI models. For example, the model uses only one-tenth of the computing power required by Meta's Llama 3.1.

DeepSeek has developed innovative methods, such as Multi-head Latent Attention and Mixture-of-Experts, that reduce the need for large datasets and advanced hardware. The company's approach is also characterized by an open-source strategy, which has attracted attention from the global AI research community. Experts believe this development could challenge US export controls, as DeepSeek has demonstrated that advanced AI models can be developed with fewer resources through software optimization.
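To give a feel for why Mixture-of-Experts saves computation, here is a minimal sketch of top-k expert routing in general. It is not DeepSeek's implementation: the experts are trivial functions, the gate scores are hard-coded rather than produced by a learned router, and names such as `moe_forward` are hypothetical. The point is that only a few experts run for each input, while the rest stay idle.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(x, gate_scores, experts, top_k=2):
    """Route input x to the top_k highest-scoring experts and mix their outputs."""
    # Indices of the k experts with the highest gate score.
    top = sorted(range(len(experts)), key=lambda i: gate_scores[i], reverse=True)[:top_k]
    # Renormalise the gate weights over the selected experts only.
    weights = softmax([gate_scores[i] for i in top])
    # Only the selected experts are evaluated; the others cost nothing.
    output = sum(w * experts[i](x) for w, i in zip(weights, top))
    return output, top


# Four toy "experts"; in a real model each would be a neural sub-network.
experts = [
    lambda x: 2 * x,
    lambda x: x + 1,
    lambda x: -x,
    lambda x: x * x,
]

# Assumed gate scores; a real router computes these from the input.
gate_scores = [0.1, 2.0, -1.0, 1.0]

output, chosen = moe_forward(3.0, gate_scores, experts, top_k=2)
print(chosen, output)
```

With four experts and `top_k=2`, half of the experts are skipped for this input. In large models the ratio is far more favorable, since only a small fraction of the total parameters is active per token.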

Google introduces Titans: AI with human-like memory

Google has launched a new AI architecture called Titans, which combines short- and long-term memory to mimic human cognition. Titans builds on the transformer architecture that underlies modern AI but adds a “neural long-term memory” and a learning metric that prioritizes crucial or unexpected events. This allows Titans to process large amounts of data and solve complex tasks more efficiently than previous models.

With a new approach to memory management and increased flexibility in processing historical data, Titans can analyze large documents, detect anomalies, and remember important context over longer periods. Tests show that Titans outperforms existing models in areas like language understanding, time-series tasks, and even DNA modeling. The technology has the potential to revolutionize research, medical diagnostics, and many other fields.

Other news of the week

This article has been machine-translated into English. Therefore, there may be nuances or errors in the content. The Danish version is always up-to-date and accurate.